This is the text version of a talk I gave on October 1, 2015, at the Strata+Hadoop World conference in New York City. The video version is here (20 mins).

Haunted By Data

In preparing this talk I decided to check out the data landscape, since I hadn't seen it for a while. The terminology around Big Data is surprisingly bucolic. Data flows through streams into the data lake, or else it's captured in logs. A data silo stands down by the old data warehouse, where granddaddy used to sling bits. And high above it all floats the Cloud. Then this stuff presumably flows into the digital ocean.

I would like to challenge this picture, and ask you to imagine data not as a pristine resource, but as a waste product, a bunch of radioactive, toxic sludge that we don’t know how to handle.

In particular, I'd like to draw a parallel between what we're doing and nuclear energy, another technology whose beneficial uses we could never quite untangle from the harmful ones.

A singular problem of nuclear power is that it generated deadly waste whose lifespan was far longer than the institutions we could build to guard it. Nuclear waste remains dangerous for many thousands of years.

This oddity led to extreme solutions like 'put it all in a mountain' and 'put a scary sculpture on top of it' so that people don't dig it up and eat it. But we never did find a solution. We just keep this stuff in swimming pools or sitting around in barrels.

The data we're collecting about people has this same odd property. Tech companies come and go, not to mention the fact that we share and sell personal data promiscuously.

But information about people retains its power as long as those people are alive, and sometimes as long as their children are alive. No one knows what will become of sites like Twitter in five years or ten. But the data those sites own will retain the power to hurt for decades.

Nuclear waste comes in two flavors. There is the spent nuclear fuel, which is extremely radioactive but concentrated, and then low-grade waste, things like coveralls or contaminated topsoil, that is much less dangerous but bulky. Similarly, we have data that we treat as especially sensitive, like financial and medical records. But then there’s this vast bulk of other data, which it turns out is also packed full of secrets.

Your fitness tracker might reveal that you're having an affair on your lunch hour. More and more devices are location-aware, or talk to devices that are location-aware. This lets you recreate people's movements decades after the fact, something we've never been able to do before. If you have a predictive search engine, it stores all kinds of things that people paste into their text fields by accident, including passwords. And you probably don’t know this. The whole point of having a data lake is so you can just chuck things in there and go fishing for patterns later.

In a world where everything is tracked and kept forever, like the world we're for some reason building, you become hostage to the worst thing you've ever done. Whoever controls that data has power over you, whether or not they exercise it. And yet we treat this data with the utmost carelessness, as if it held no power at all.

Eric Schmidt of Google suggests that one way to solve the problem is to never do anything that you don’t want made public.

But sometimes there's no way to know ahead of time what is going to be bad.

In the forties, the Soviet Union was our ally. We were fighting Hitler together! It was fashionable in Hollywood to hang out with Communists and progressives and other lefty types. Ten years later, any hint of Communist ties could put you on a blacklist and end your career. Some people went to jail for it. Imagine if we had had Instagram back then.

Closer to our time, consider the hypothetical case of a gay blogger in Moscow who opens a LiveJournal account in 2004, to keep a private diary.

In 2007 LiveJournal is sold to a Russian company, and a few years later, to everyone's surprise, homophobia is elevated to state ideology. Now that blogger has to live with a dark pit of fear in his stomach.

Remember that for most people in the world, the government and police are something to be afraid of.

Even right here in New York City, the NYPD is conducting intensive online surveillance of black kids based on what block they live on. They've put up scary surveillance towers at mosques.

Former CIA and NSA director Michael Hayden, who is doing his best to resemble a Bond villain, has said “we kill people based on metadata.”

So when you commit to collecting personal data, and storing it forever, you're making a serious decision.

I don't mean to pick on any particular government. In the long run, all governments are evil.

You might have a Golden Age that lasts for years, but at some point you wind up with a Caligula or Stephen Harper. It's not even necessary to invent exotic scenarios where America becomes a dictatorship. All you have to do is ask yourself four letters: WWND.

“What would Nixon do?”

This is a man so diabolical he didn’t just wiretap journalists and citizens, he wiretapped himself! A man who hired psychopaths to bomb the Brookings Institution, and hired different psychopaths to steal the psychiatric records of one of his enemies. Nixon's crimes are an example of the worst kinds of abuse of domestic surveillance. And they're well within the time horizon of the kind of data we're all storing.

I want you to go through a visualization exercise with me. Really imagine it. Nixon's in your datacenter. He's got his laptop open. He's logged in! He's got root! What does he find? If you didn't break into a cold sweat at the thought, congratulations. You are a good steward of data. But if Tricky Dick in your data center scares you, then consider what you're doing.

Information is power. Just like we could never separate the peaceful uses of atomic energy from the bad, there's no way to have different kinds of mass surveillance.

Our friend from the CIA* told us this morning that government is eager to adopt our worst practices. We teach them how to spy on us. When they want the data badly enough, they just come and take it. And the fact that we do all this data collection, and that we so promiscuously share it, gives governments cover to do the same. They would never dare do it on this scale if we weren't doing the same thing. We're enabling.

*This refers to a keynote by Douglas Wolfe, Chief Information Officer of the CIA, who spoke earlier that morning. His talk included the line "the truth will set you free."

So that's the harm that surveillance culture does to others. But collecting all this data hurts us, too.

You can't just set up an elaborate surveillance infrastructure and then decide to ignore it.

These data pipelines take on an institutional life of their own, and it doesn't help that people speak of the "data driven organization" with the same religious fervor as a "Christ-centered life". The data mindset is good for some questions, but completely inadequate for others. But try arguing that with someone who insists on seeing the numbers. The promise is that enough data will give you insight. Retain data indefinitely, maybe waterboard it a little, and it will spill all its secrets.

There's a little bit of a con going on here. On the data side, they tell you to collect all the data you can, because they have magic algorithms to help you make sense of it. On the algorithms side, where I live, they tell us not to worry too much about our models, because they have magical data. We can train on it without caring how the process works. The data collectors put their faith in the algorithms, and the programmers put their faith in the data. At no point in this process is there any understanding, or wisdom. There’s not even domain knowledge. Data science is the universal answer, no matter the question.

Every Hadoop cluster should be required by law to come with a framed photograph of Robert McNamara. Here was a smart and decent man who spent so much time looking at a dashboard that he never bothered to look out the window. And the result was disaster. We have no better example of the limits of data science.

I worry one reason we haven't learned from the fiasco of systems analysis and data worship in the sixties is that the story is just anecdotal. It’s only a single data point. But the lesson is there for anyone willing to look.

Let me tell you one last scary story: that Big Data may actually make things worse.

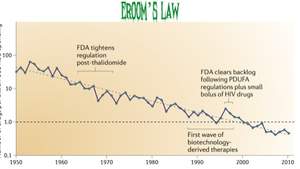

The pharmaceutical industry has something called Eroom's Law (which is ‘Moore’s Law’ spelled backwards). It's the observation that the number of drugs discovered per billion dollars in research has dropped by half every nine years since 1950. The graph in this slide has a log axis, so it's really an exponential decline.

This is astonishing, because the entire science of biochemistry has developed since 1950. Every step of the drug discovery pipeline has become more efficient, some by orders of magnitude, and yet overall the process is eighty times less cost-effective.
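
A quick check of the arithmetic, as a rough sketch rather than anything taken from the underlying study: if output per research dollar halves every nine years, an eighty-fold decline takes a little under 57 years, which fits a trend that starts in 1950. The function names here are made up for illustration.

```python
import math

# From the talk: drugs discovered per research dollar halve every nine years.
HALVING_PERIOD_YEARS = 9


def relative_efficiency(years_elapsed: float) -> float:
    """Output per research dollar, relative to the starting year."""
    return 0.5 ** (years_elapsed / HALVING_PERIOD_YEARS)


def years_to_decline(fold: float) -> float:
    """Years needed for efficiency to fall by the given factor."""
    return HALVING_PERIOD_YEARS * math.log2(fold)


# How long does an eighty-fold drop take at this rate?
years_for_eightyfold = years_to_decline(80)
print(f"{years_for_eightyfold:.1f} years for an 80x decline")
```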

A chain-smoking chemist injecting random things into mice is provably a better research investment than a genomics data center. You might think of some reasons why this is happening. Maybe all the easy drugs were found first, or the regulatory environment is much stricter than it used to be. But these excuses don't hold up to scrutiny. In the worst case, they might have blunted the impact of the breakthroughs, slowed the rate of improvement. But how did things get eighty times worse?

This has been a bitter pill to swallow for the pharmacological industry. They bought in to the idea of big data very early on. The growing fear is that the data-driven approach is inherently a poor fit for life science. In the world of computers, we learn to avoid certain kinds of complexity, because they make our systems impossible to reason about. But Nature is full of self-modifying, interlocking systems, with interdependent variables you can't isolate. In these vast data spaces, directed iterative search performs better than any amount of data mining. My contention is that many of you doing data analysis on the real world will run into similar obstacles, hopefully not at the same cost as pharmacology.

The ultimate self-modifying, adaptive system is any system that involves people. In other words, the kind of thing most of you are trying to model. Once you’re dealing with human behavior, models go out the window, because people will react to what you do. In Soviet times, there was the old anecdote about a nail factory. In the first year of the Five-Year Plan, they were evaluated by how many nails they could produce, so they made hundreds of millions of uselessly tiny nails.

Seeing their mistake, the following year the planners decided to evaluate them by weight, so they just made a single giant nail.

A more recent and less fictitious example is electronic logging devices on trucks. These are intended to limit the hours people drive, but what do you do if you're caught ten miles from a motel? The device logs only once a minute, so if you accelerate to 45 mph, and then make sure to slow down under the 10 mph threshold right at the minute mark, you can go as far as you want. So we have these tired truckers staring at their phones, bunny-hopping down the freeway late at night.

Of course there's an obvious technical countermeasure. You can start measuring once a second. Notice what you're doing, though. Now you're in an adversarial arms race with another human being that has nothing to do with measurement. It's become an issue of control, agency and power. You thought observing the driver’s behavior would get you closer to reality, but instead you've put another layer between you and what's really going on.

These kinds of arms races are a symptom of data disease. We've seen them reach the point of absurdity in the online advertising industry, which unfortunately is also the economic cornerstone of the web. Advertisers have built a huge surveillance apparatus in the dream of perfect knowledge, only to find themselves in a hall of mirrors, where they can't tell who is real and who is fake.
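
The once-a-minute loophole in the trucking example can be sketched as a toy simulation. Everything here except the 10 mph threshold, the 45 mph cruising speed and the one-minute interval is an assumption made for illustration: the driver's exact speed profile is invented, and this is not how any real logging device is implemented.

```python
THRESHOLD_MPH = 10  # from the talk: below this, the device treats the truck as stopped


def speed_at(second: int) -> float:
    """Hypothetical bunny-hopping driver: crawls near each minute mark, 45 mph otherwise."""
    offset = second % 60
    return 5.0 if offset < 5 or offset >= 55 else 45.0


def logged_driving_samples(duration_s: int, interval_s: int) -> int:
    """Count the samples at which a logger polling every interval_s would see driving."""
    return sum(
        1
        for t in range(0, duration_s, interval_s)
        if speed_at(t) >= THRESHOLD_MPH
    )


def miles_travelled(duration_s: int) -> float:
    """Actual distance covered, adding up speed one second at a time."""
    return sum(speed_at(t) for t in range(duration_s)) / 3600.0


ONE_HOUR = 3600
per_minute = logged_driving_samples(ONE_HOUR, 60)  # what the once-a-minute device sees
per_second = logged_driving_samples(ONE_HOUR, 1)   # what the countermeasure would see
distance = miles_travelled(ONE_HOUR)

print(f"minute logger: {per_minute} driving samples")
print(f"second logger: {per_second} driving samples")
print(f"actual distance: {distance:.1f} miles")
```

In this made-up profile the minute-by-minute log shows a parked truck for the whole hour, while the truck covers dozens of miles. Sampling every second closes this particular gap, which is exactly the next move in the arms race.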

I think of this as the Jetsons fallacy, where you imagine you can transform the world with technology without changing anything about people's behavior. Of course the world reacts and changes shape in ways no one person can anticipate.

Here's the bottom line: your industry is too scary.

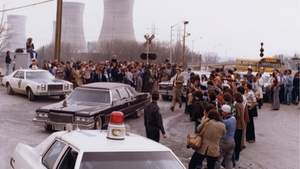

We haven't broken ground on a new nuclear plant in the United States since 1973. Even now that we have safe reactor designs that can't melt down, and are desperately in need of energy sources that don't put carbon in the atmosphere, none of this matters, because people got spooked. They were lied to by boosters of the technology, and then they saw men in bunny suits walking along a Pennsylvania island, and it was all over.

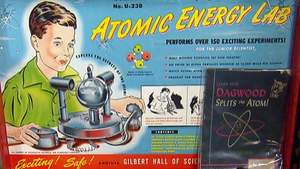

Back in the day, radioactivity used to be cool! One of the first things we learned about it was that it fought cancer!

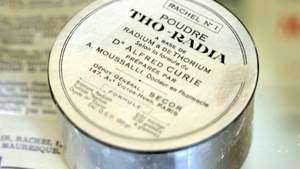

At one point we had radioactive makeup.

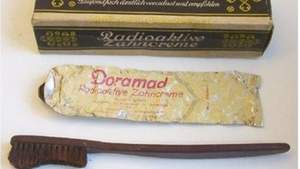

Radioactive toothpaste.

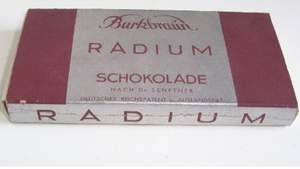

Delicious radium chocolate.

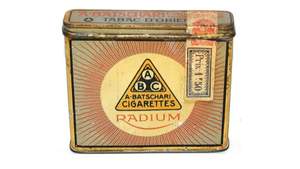

Who can forget the smooth, rich taste of radium cigarettes?

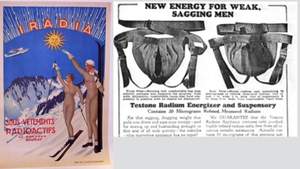

Radium condoms that glowed in the dark.

And of course, radium underpants. We're very much in the 'radium underpants' stage of the surveillance economy. A lot of the hype is silly, some of what we're doing is useful, and some of it is downright harmful. There are those of you in the audience who are definitely working on radium cigarettes.

You're thinking, okay Maciej, your twelve minutes of sophistry and labored analogies have convinced me that my entire professional life is a lie. What should I do about it? I hope to make you believe data collection is a trade-off. It hurts the people whose data you collect, but it also hurts your ability to think clearly. Make sure that it's worth it! I'm not claiming that the sponsors of this conference are selling you a bill of goods. I'm just heavily implying it. Here's what I want you to do specifically:

Don't collect it! If you can get away with it, just don't collect it! Just like you don't worry about getting mugged if you don't have any money, your problems with data disappear if you stop collecting it. Switch from the hoarder's mentality of 'keep everything in case it comes in handy' to a minimalist approach of collecting only what you need. Your marketing team will love you. They can go tell your users you care about privacy!

If you have to collect it, don't store it! Instead of stocks and data mining, think in terms of sampling and flows. "Sampling and flows" even sounds cooler. It sounds like hip-hop! You can get a lot of mileage out of ephemeral data. There's an added benefit that people will be willing to share things with you they wouldn't otherwise share, as long as they can believe you won't store it. All kinds of interesting applications come into play.
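
One way to read "flows" in code, as a minimal sketch and not a recipe from the talk: consume events as they pass and keep only an aggregate, never the raw records. The event fields (`user`, `page`) and the function names are hypothetical.

```python
from collections import Counter
from typing import Iterable, Iterator


def event_stream() -> Iterator[dict]:
    """Hypothetical flow of raw events; in practice this would be a live feed."""
    yield {"user": "alice", "page": "/home"}
    yield {"user": "bob", "page": "/pricing"}
    yield {"user": "alice", "page": "/home"}


def summarize(events: Iterable[dict]) -> Counter:
    """Consume events one at a time and keep only per-page counts."""
    counts: Counter = Counter()
    for event in events:
        # Only the page name is counted; the raw event, user included, is never kept.
        counts[event["page"]] += 1
    return counts


page_counts = summarize(event_stream())
print(page_counts)
```

The aggregate still answers "which pages get traffic?", but there is nothing left over that says who visited what.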

If you have to store it, don't keep it! Certainly don't keep it forever. Don't sell it to Acxiom! Don't put it in Amazon Glacier and forget it. I believe there should be a law that limits behavioral data collection to 90 days, not because I want to ruin Christmas for your children, but because I think it will give us all better data while clawing back some semblance of privacy.
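
A 90-day limit like the one proposed above could be enforced with something as small as the following sketch. The record shape (a dict with a timezone-aware `collected_at` field) and the function name are assumptions made for illustration; a real system would also have to purge backups and downstream copies.

```python
from datetime import datetime, timedelta, timezone

RETENTION = timedelta(days=90)  # the 90-day limit proposed in the talk


def purge_expired(records, now=None):
    """Return only the records collected within the retention window.

    Each record is assumed to be a dict with a timezone-aware
    'collected_at' datetime (a hypothetical schema).
    """
    now = now or datetime.now(timezone.utc)
    cutoff = now - RETENTION
    return [r for r in records if r["collected_at"] >= cutoff]


# Example run with a fixed "today" so the result is reproducible.
today = datetime(2015, 10, 1, tzinfo=timezone.utc)
records = [
    {"id": 1, "collected_at": today - timedelta(days=10)},
    {"id": 2, "collected_at": today - timedelta(days=91)},
]
kept = purge_expired(records, now=today)
print([r["id"] for r in kept])
```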

Finally, don't be surprised. The current model of total surveillance and permanent storage is not tenable. If we keep it up, we'll have our own version of Three Mile Island, some widely-publicized failure that galvanizes popular opinion against the technology. At that point people who are angry, mistrustful, and may not understand a thing about computers will regulate your industry into the ground. You'll be left like those poor saps who work in the nuclear plants, who have to fill out a form in triplicate anytime they want to sharpen a pencil. You don't want that. Even I don't want that. We can have that radiant future but it will require self-control, circumspection, and much more concern for safety than we've been willing to show. It's time for us all to take a deep breath and pull off those radium underpants.

Thank you very much for your time, and please enjoy the rest of your big data conference.

STOCHASTIC, EXPONENTIALLY DECAYING APPLAUSE